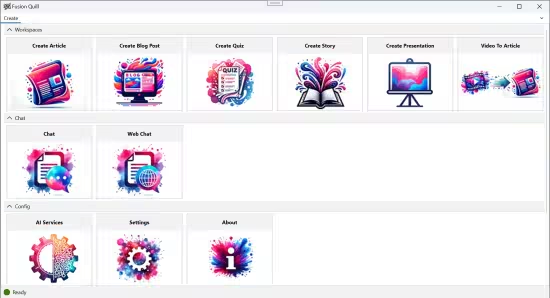

Languages: English

File Size: 206.34 MB

Securely integrate AI, user experience, and workflow with your data. Imagine Fusion Quill: your AI wizard for handling mundane work! Fusion Quill makes AI accessible to everyone, eliminating the need for complex prompting. Experience the future of AI interaction with Fusion Quill’s intuitive Wizard Workflows, AI Word Processor, and chat user interfaces.

Bringing Together AI, User Experience, Workflow, and Your Data

At Fusion Quill, we believe in the power of AI to transform the workplace, making it more efficient, innovative, and inclusive. For AI to truly make an impact, it needs to seamlessly blend into the daily workflows of information workers like you.

Our Solution: We take the user experience metaphors that information workers already know and integrate them with AI, workflows, and your data.

Accessibility

No specialized AI Prompting knowledge required.

BYOAI

Connect to Any AI model. No Lock-in!

Integration

By connecting AI with your existing data and workflows, we empower you to achieve more without overhauling your processes.

Empowerment

Our goal is to demystify AI and put its power into your hands, ensuring you can leverage it to enhance your work, not replace it.

Fusion Quill connects to Local Models and API models.

– Local Models – GGUF Models from Hugging Face.

– API Models – OpenAI, Azure AI, Google Gemini, Amazon Bedrock, Groq, Ollama, Hugging Face, vLLM, llama.cpp, etc.

– AI Workflows

– AI Word Processor

– Chat with AI
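Several of the API backends listed above (for example Ollama, vLLM, and the llama.cpp server) can expose an OpenAI-style chat completions endpoint. The sketch below shows what a request to such an endpoint might look like. It is an illustration only, not Fusion Quill's own code: the function names, the base URL, and the model name `llama3` are assumptions, and the URL shown is the usual default for a local Ollama install.

```python
import json
from urllib import request


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "") -> request.Request:
    """Build (but do not send) a POST request for an OpenAI-style chat endpoint."""
    url = base_url.rstrip("/") + "/chat/completions"
    body = json.dumps({
        "model": model,  # hypothetical model name, depends on the backend
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:  # local servers usually need no key; hosted APIs do
        headers["Authorization"] = f"Bearer {api_key}"
    return request.Request(url, data=body, headers=headers, method="POST")


def send_chat_request(req: request.Request) -> str:
    """Send the request and return the text of the first reply."""
    with request.urlopen(req, timeout=60) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]


# Assumed default address of a local Ollama server; adjust for your backend.
req = build_chat_request("http://localhost:11434/v1", "llama3", "Summarize this memo.")
```

Calling `send_chat_request(req)` only works when a compatible server is actually running at that address. Switching backends is then a matter of changing the base URL, model name, and API key.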

Basic Requirements

– Windows 11 or 10 PC with an Internet connection

– 16GB RAM

Requirements for Local Inference

– Windows 11 PC (preferably manufactured in the last 2 years)

– NVIDIA or AMD GPU preferred, with an up-to-date GPU driver.

– CUDA 11 or 12 installed, for faster local LLM inference on NVIDIA GPUs.

– 32 GB RAM (text generation works with 16 GB but is slow)

– 12 GB min disk space, 24+ GB recommended.

– First-time AI generation is slow because the model has to load.

– A fast Internet connection (> 50 Mbps) is recommended for downloading large AI models.
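The figures in the list above can be turned into a quick pre-install check. The following is a minimal sketch, not an official tool: the function names are hypothetical, installed RAM is passed in by hand because the Python standard library has no portable way to read it, and the 4 GB model size in the usage line is only an example.

```python
import shutil

# Thresholds taken from the requirements listed above (in GB).
MIN_RAM_GB, RECOMMENDED_RAM_GB = 16, 32
MIN_DISK_GB, RECOMMENDED_DISK_GB = 12, 24


def rate(value: float, minimum: float, recommended: float) -> str:
    """Classify a measured value against a minimum and a recommended level."""
    if value < minimum:
        return "below minimum"
    if value < recommended:
        return "meets minimum"
    return "recommended"


def free_disk_gb(path: str = ".") -> float:
    """Free space on the drive holding `path`, in decimal gigabytes."""
    return shutil.disk_usage(path).free / 1e9


def download_minutes(model_size_gb: float, link_mbps: float = 50.0) -> float:
    """Best-case download time: 1 GB = 8000 megabits, no protocol overhead."""
    return model_size_gb * 8000 / link_mbps / 60


print("RAM :", rate(16, MIN_RAM_GB, RECOMMENDED_RAM_GB))
print("Disk:", rate(free_disk_gb(), MIN_DISK_GB, RECOMMENDED_DISK_GB))
print(f"4 GB model at 50 Mbps: about {download_minutes(4):.1f} minutes")
```

At the recommended 50 Mbps, a 4 GB model takes roughly 11 minutes in the best case. Real downloads are usually somewhat slower.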

* For a truly local experience, download the local AI models (GGUF and ONNX). Make sure your system has adequate disk space and a high-speed Internet connection for a smooth setup. For optimal local AI performance, we recommend an NVIDIA RTX 3000 Series GPU (or AMD equivalent) combined with an Intel/AMD 12th-generation or newer CPU and at least 32 GB of RAM.
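Because local models are large downloads, it can help to confirm that a file really is a GGUF model before pointing an application at it. GGUF files begin with the four ASCII bytes `GGUF` followed by a 32-bit version number. The sketch below reads that header. The function name is hypothetical, and it assumes the common little-endian layout.

```python
import struct

GGUF_MAGIC = b"GGUF"  # first four bytes of every GGUF file


def read_gguf_version(path: str):
    """Return the GGUF version number, or None if the file is not GGUF."""
    with open(path, "rb") as f:
        header = f.read(8)
    if len(header) < 8 or header[:4] != GGUF_MAGIC:
        return None  # truncated download or a different file format
    # Assumption: little-endian unsigned 32-bit version field.
    (version,) = struct.unpack("<I", header[4:8])
    return version
```

A result of `None` usually points to an interrupted download or to a file in another format, such as ONNX.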